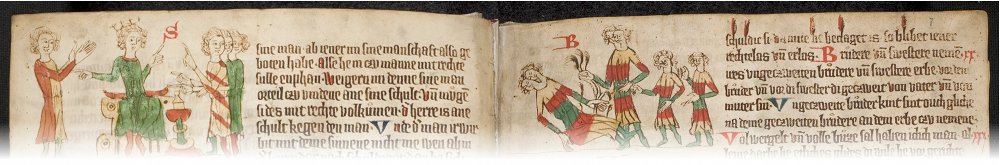

Fig 1. Excerpt from the Heidelberg Sachsenspiegel, ca. 1300

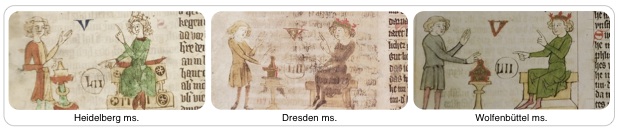

In this project we seek to better understand the purpose and origins of legal gestures depicted in medieval manuscripts. Our approach is based on four illustrated manuscripts of Eike von Repgow's Sachsenspiegel (Mirror of the Saxons), one of the oldest works on German law. Only a few copies are preserved, each named after its present location. Figs. 1 and 2 show excerpts from some of these copies.

Fig 2. Example scene correspondence in the Sachsenspiegel manuscripts

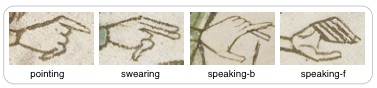

As a first step towards analyzing legal gestures and their usage, we focus on automatically identifying them in images. For this purpose we developed a gesture detection system based on the visual similarity of the gestures' hand-drawn contours. Examples of gestures frequently found in the manuscripts are shown in Fig. 3. We evaluated the system on this set of gestures in the Heidelberg Sachsenspiegel.

Fig 3. Set of gestures used to evaluate the detection system. Pointing and swearing gestures differ by only a finger. The system differentiates between the front and back views of speaking gestures.

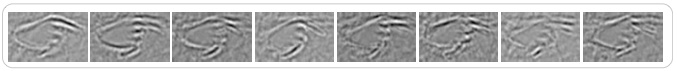

We use a template-based detection system to capture the fine details that distinguish gestures. To model the visual variation within a gesture type, such as pointing, we assemble a representative set of templates. Fig. 4 illustrates the possible variations among pointing gestures. Our detector relies on the Laplacian of Gaussian (LoG) to create high-contrast responses along contours (bottom row of Fig. 4). When a LoG template is aligned with a matching gesture, the correlation between the two is strong.

Fig 4. Learned subset of representative pointing gestures. The second row shows Laplacian of Gaussian responses used for template learning and detection.
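To make the role of the Laplacian of Gaussian concrete, the following is a minimal NumPy sketch and not the project's implementation. The function names `log_kernel` and `filter2d`, the kernel radius of three standard deviations, and the edge-replicating padding are all assumptions chosen for illustration.

```python
import numpy as np

def log_kernel(sigma, radius=None):
    """Return a zero-mean Laplacian-of-Gaussian kernel of size (2r+1, 2r+1).

    The default radius of ceil(3 * sigma) is an assumption of this sketch.
    """
    if radius is None:
        radius = int(np.ceil(3 * sigma))
    ax = np.arange(-radius, radius + 1, dtype=float)
    xx, yy = np.meshgrid(ax, ax)
    r2 = xx ** 2 + yy ** 2
    # LoG up to a constant factor: (r^2 - 2 sigma^2) / sigma^4 * exp(-r^2 / (2 sigma^2))
    kernel = (r2 - 2 * sigma ** 2) / sigma ** 4 * np.exp(-r2 / (2 * sigma ** 2))
    # Subtract the mean so that flat image regions produce no response.
    return kernel - kernel.mean()

def filter2d(image, kernel):
    """Same-size filtering with edge-replicated padding.

    The LoG kernel is symmetric, so correlation and convolution coincide.
    """
    r = kernel.shape[0] // 2
    padded = np.pad(image, r, mode="edge")
    h, w = image.shape
    out = np.zeros((h, w), dtype=float)
    for dy in range(kernel.shape[0]):
        for dx in range(kernel.shape[1]):
            out += kernel[dy, dx] * padded[dy:dy + h, dx:dx + w]
    return out

# Usage: a vertical step edge, standing in for a drawn contour.
image = np.zeros((21, 21))
image[:, 10:] = 1.0
response = filter2d(image, log_kernel(sigma=1.5))
```

The response is zero in flat regions and changes sign across the step, which is why contours stand out clearly in the filtered templates.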

To learn the representative set of templates for a gesture, we compute the PCA decomposition of a set of training examples. Fig. 5 shows several principal components of the pointing gesture that display significant variation. We identify the representative set of templates as those with the largest projections onto the principal components. The gestures in Fig. 4 represent the characteristic subset of pointing gestures out of approximately 100 instances.

Fig 5. Principal components of the pointing gesture arranged from left to right according to the amount of visual variation they capture
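The selection step can be sketched as follows. This is one possible reading of "largest projection", not necessarily the exact rule used in the project: for each leading principal component we pick the single training example with the largest absolute projection onto it. The function name `select_representative_templates` and the parameter `n_components` are hypothetical.

```python
import numpy as np

def select_representative_templates(examples, n_components=3):
    """Pick one representative example per leading principal component.

    examples: array of shape (n, height, width), e.g. LoG-filtered gesture crops.
    Returns a list of example indices, one per component (assumed rule:
    largest absolute projection, skipping examples already chosen).
    """
    n = examples.shape[0]
    X = examples.reshape(n, -1).astype(float)
    X = X - X.mean(axis=0)  # center the data before PCA

    # Rows of vt are the principal components, ordered by explained variance.
    _, _, vt = np.linalg.svd(X, full_matrices=False)
    projections = X @ vt[:n_components].T  # shape (n, n_components)

    chosen = []
    for k in range(projections.shape[1]):
        # Examples ranked by absolute projection onto component k.
        for idx in np.argsort(-np.abs(projections[:, k])):
            if int(idx) not in chosen:
                chosen.append(int(idx))
                break
    return chosen

# Usage with toy 2x2 "images" that vary along two independent pixels.
examples = np.zeros((6, 2, 2))
examples[:, 0, 0] = [-6, 10, -4, 0, 0, 0]
examples[:, 1, 1] = [0, 0, 0, 5, -2, -3]
templates = select_representative_templates(examples, n_components=2)
```

Choosing extreme examples rather than the components themselves keeps every template an actual drawn gesture, which matches the description of Fig. 4 as a subset of the training instances.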

We use an efficient version of normalized cross-correlation with the templates to vote for detections over scales and rotations. Detection hypotheses with a high score are then verified with a support vector machine trained on histogram of oriented gradients (HOG) features.
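As an illustration of the matching step, the sketch below computes plain normalized cross-correlation by brute force and keeps the best score over a small set of template rotations. It is deliberately simplified and rests on several assumptions: it is not the efficient variant mentioned above, it only tries rotations in 90 degree steps, it does not search over scale, and it omits the SVM verification. The names `ncc_map` and `best_detection` are hypothetical.

```python
import numpy as np

def ncc_map(image, template):
    """Normalized cross-correlation score for every valid template position."""
    th, tw = template.shape
    t = template - template.mean()
    t_norm = np.sqrt((t ** 2).sum())
    rows = image.shape[0] - th + 1
    cols = image.shape[1] - tw + 1
    scores = np.zeros((rows, cols))
    for y in range(rows):
        for x in range(cols):
            patch = image[y:y + th, x:x + tw]
            p = patch - patch.mean()
            denom = np.sqrt((p ** 2).sum()) * t_norm
            if denom > 1e-12:  # constant patches keep a score of 0
                scores[y, x] = (p * t).sum() / denom
    return scores

def best_detection(image, template, quarter_turns=(0, 1, 2, 3)):
    """Return (score, (row, col), k) for the best match over 90-degree rotations.

    k is the number of counterclockwise quarter turns applied to the template.
    """
    best = (-np.inf, None, None)
    for k in quarter_turns:
        scores = ncc_map(image, np.rot90(template, k))
        y, x = np.unravel_index(np.argmax(scores), scores.shape)
        if scores[y, x] > best[0]:
            best = (float(scores[y, x]), (int(y), int(x)), k)
    return best

# Usage: hide a rotated copy of the template in an otherwise empty image.
template = np.array([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 10.0]])
image = np.zeros((12, 12))
image[4:7, 5:8] = np.rot90(template, 1)
score, position, turns = best_detection(image, template)
```

Because the score is normalized by the patch and template energy, it does not depend on the absolute brightness or contrast of the page, only on the shape of the contour pattern.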

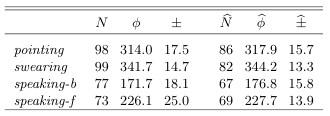

Tab. 1.

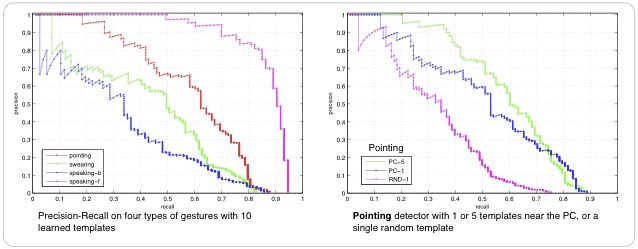

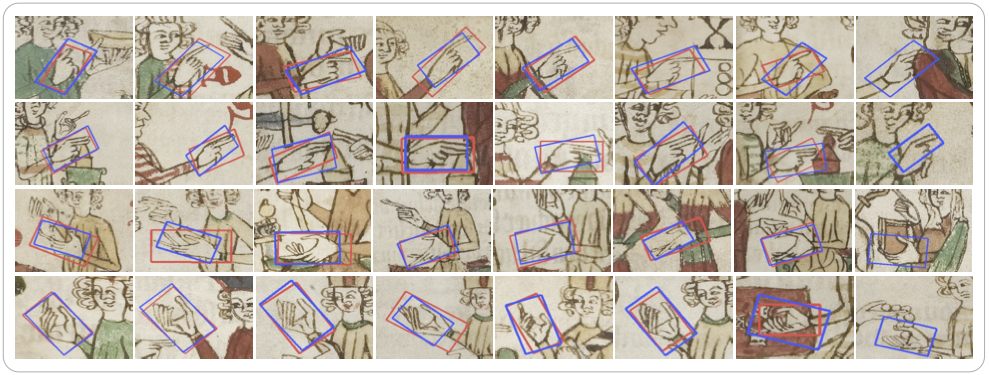

The detection results in Tab. 1 closely match the ground truth. The precision-recall curves in Fig. 6 show high accuracy, which increases further with larger sets of learned templates. Example detections for each of the four gesture types are shown in Fig. 7. The last column of each row in Fig. 7 shows a false detection. Notice how the falsely detected pattern is visually similar to the artist's rendering of the gesture.

Fig 6. Precision-Recall results for the gesture detector (left) and results showing the gain of learning template subsets for detection (right)

Fig 7. Example detected gestures (blue) compared to ground-truth bounding boxes (red). Each row shows detections from one type of gesture. The last column of each row gives an example false detection.
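For readers unfamiliar with how precision-recall curves are obtained from detections and ground-truth boxes, here is a minimal sketch. The matching criterion is an assumption: the text does not state how a detection is counted as correct, so we use the common intersection-over-union (IoU) threshold of 0.5. The boxes and scores in the usage example are made up for illustration, and the function names are hypothetical.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x0, y0, x1, y1)."""
    iw = max(0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0

def precision_recall_curve(detections, ground_truth, iou_threshold=0.5):
    """Return (precision, recall) pairs, one per detection in descending score.

    detections: list of (score, box); ground_truth: list of boxes.
    Each ground-truth box can be matched by at most one detection.
    """
    matched = set()
    tp = fp = 0
    curve = []
    for _, box in sorted(detections, key=lambda d: -d[0]):
        best_iou, best_j = 0.0, None
        for j, gt in enumerate(ground_truth):
            if j in matched:
                continue
            overlap = iou(box, gt)
            if overlap > best_iou:
                best_iou, best_j = overlap, j
        if best_j is not None and best_iou >= iou_threshold:
            matched.add(best_j)
            tp += 1
        else:
            fp += 1
        recall = tp / len(ground_truth) if ground_truth else 0.0
        curve.append((tp / (tp + fp), recall))
    return curve

# Usage with made-up boxes: two annotated gestures, three detections.
ground_truth = [(0, 0, 10, 10), (20, 20, 30, 30)]
detections = [(0.9, (1, 1, 10, 10)), (0.8, (50, 50, 60, 60)), (0.7, (20, 20, 30, 31))]
curve = precision_recall_curve(detections, ground_truth)
```

Sweeping the score threshold from high to low traces the curve: each additional detection either raises recall (a correct match) or lowers precision (a false detection such as those in the last column of Fig. 7).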

From here we will evaluate the detection system on other copies of the Sachsenspiegel to provide a systematic comparison of gesture usage. We plan to follow this with high-level scene analysis to automatically determine if gestures are used in a specific legal context.

- Detecting Gestures in Medieval Images by J. Schlecht, B. Carqué and B. Ommer, in: IEEE International Conference on Image Processing (ICIP), due in September 2011 - Frontier Symposium, Universität Heidelberg, March 2011.

- Nonverbal Communication in Medieval Illustrations Revisited by Computer Vision and Art History by J. Schlecht, P. Bell and B. Ommer, in: Visual Resources Journal, Special Issue on Digital Art History, 2013

- Heidelberger Sachsenspiegel — Universitätsbibliothek Heidelberg

- Dresdener Sachsenspiegel — Sächsische Landesbibliothek Dresden

- Wolfenbütteler Sachsenspiegel — Herzog August Bibliothek Wolfenbüttel

- Oldenburger Sachsenspiegel — Landesbibliothek Oldenburg