Challenge:

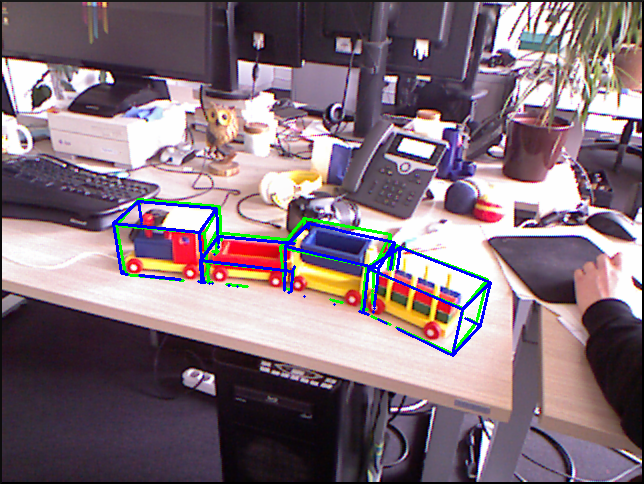

The purpose of this challenge is to compare different methods of pose estimation for articulated objects. Our dataset contains four objects, each consisting of rigid parts connected by 1D revolute and prismatic joints. Given an RGB-D image, a method has to estimate the position and orientation of each part, subject to the constraints introduced by the joints. You can apply your method to our data and submit your results. We will evaluate submitted results according to multiple metrics and display the scores for comparison.

Scores:

| Method | AD | 5cm, 5deg | IOU |
|---|---|---|---|
| Pose Estimation of Kinematic Chain Instances | 89.02% | 59.78% | 91.17% |
| Learning 6D Object Pose Estimation | 29.34% | 8.28% | 40.98% |

Dataset:

You can find our dataset here. Please cite [Michel2015] when using it. See also the description of file formats and folder structure. We provide the dataset under the CC BY-SA 4.0 license.

Evaluation:

We calculate the percentage of correctly estimated poses. The pose of an object is considered correct when the poses of all its parts are correct. We use three different criteria:

- The AD criterion [Hinterstoisser2012]: We calculate the Average Distance (AD) between the vertices of the part's 3D model in the estimated pose and in the ground truth pose. A pose is considered correct when this average distance is below 10% of the object diameter.

- 5cm, 5deg [Shotton2013]: A pose is considered correct when the translational error is below 5cm and the rotational error is below 5deg.

- The IOU criterion: We calculate the 2D axis-aligned bounding boxes of the part in the estimated pose and in the ground truth pose, and then the IOU (Intersection Over Union) of the two bounding boxes. A pose is considered correct when this value is above a threshold of 0.5.
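The three criteria above can be sketched in a few lines of NumPy. This is a minimal illustration, not the official evaluation code. It makes several assumptions that the text does not state: poses are given as a 3x3 rotation matrix `R` and a translation vector `t`, translations and model vertices are in metres, the AD distance is taken between corresponding vertices, and bounding boxes are already available as `(x_min, y_min, x_max, y_max)`. All function names are hypothetical.

```python
import numpy as np


def ad_error(vertices, R_est, t_est, R_gt, t_gt):
    """Mean distance between corresponding model vertices under both poses."""
    est = vertices @ R_est.T + t_est  # vertices has shape (N, 3)
    gt = vertices @ R_gt.T + t_gt
    return float(np.linalg.norm(est - gt, axis=1).mean())


def ad_correct(vertices, R_est, t_est, R_gt, t_gt, diameter, factor=0.1):
    """AD criterion: average distance below 10% of the object diameter."""
    return ad_error(vertices, R_est, t_est, R_gt, t_gt) < factor * diameter


def rotation_error_deg(R_est, R_gt):
    """Angle (degrees) of the relative rotation between the two poses."""
    cos_angle = (np.trace(R_est.T @ R_gt) - 1.0) / 2.0
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))


def cm_deg_correct(R_est, t_est, R_gt, t_gt, max_trans=0.05, max_rot_deg=5.0):
    """5cm, 5deg criterion (translations assumed to be in metres)."""
    trans_err = float(np.linalg.norm(np.asarray(t_est) - np.asarray(t_gt)))
    return trans_err < max_trans and rotation_error_deg(R_est, R_gt) < max_rot_deg


def bbox_iou(box_a, box_b):
    """IOU of two axis-aligned boxes given as (x_min, y_min, x_max, y_max)."""
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def iou_correct(box_est, box_gt, threshold=0.5):
    """IOU criterion: overlap above the 0.5 threshold."""
    return bbox_iou(box_est, box_gt) > threshold


def object_correct(part_results):
    """An object pose counts as correct only if every part pose is correct."""
    return all(part_results)
```

The reported score is then the percentage of test images for which `object_correct` holds under the chosen criterion. How the 2D bounding boxes are obtained from the posed model (for example by projecting its vertices with the camera intrinsics) is left out here because the text does not specify it.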

How to participate?

In order to participate you have to:

- Download the dataset.

- Apply your method. You can use anything as training data except the test sequences provided in the dataset.

- Write your pose estimates to .info text files using the exact format and file names as in the dataset. It is described here in section 2.3.3.

- Compress your results to a single .tgz file. Use the same folder structure as in this sample.tgz.

- Email your results to articulation-challenge<at>cvlab-dresden<dot>de. Please provide the name of the method as well as a URL pointing to your project page or publication.
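The packaging step can be done with Python's standard `tarfile` module. This is a minimal sketch: the folder name `results` and the function name `pack_results` are hypothetical, and whether the archive should contain a top-level folder depends on the layout of the sample archive, so check it against sample.tgz before submitting.

```python
import tarfile
from pathlib import Path


def pack_results(results_dir, archive_path):
    """Compress a results folder into a single gzip-compressed tar (.tgz)."""
    results_dir = Path(results_dir)
    with tarfile.open(archive_path, "w:gz") as tar:
        # arcname stores paths relative to the folder name, not the full
        # local path. Assumption: the archive has one top-level folder.
        tar.add(results_dir, arcname=results_dir.name)
    return Path(archive_path)
```

For example, `pack_results("results", "results.tgz")` writes `results.tgz` containing the `results` folder and everything below it, including the .info files.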

References

[Hinterstoisser2012]: Stefan Hinterstoisser, Vincent Lepetit, Slobodan Ilic, Stefan Holzer, Gary R. Bradski, Kurt Konolige, Nassir Navab:

Model Based Training, Detection and Pose Estimation of Texture-Less 3D Objects in Heavily Cluttered Scenes. ACCV 2012.

[Shotton2013]: Jamie Shotton, Ben Glocker, Christopher Zach, Shahram Izadi, Antonio Criminisi, Andrew Fitzgibbon:

Scene Coordinate Regression Forests for Camera Relocalization in RGB-D Images. CVPR 2013.

[Michel2015]: Frank Michel, Alexander Krull, Eric Brachmann, Michael Y. Yang, Stefan Gumhold, Carsten Rother:

Pose Estimation of Kinematic Chain Instances via Object Coordinate Regression. BMVC 2015.